The evaluation results, based on objective quality metrics, show that the hybrid equi-angular cube-map format is the most appropriate common format for 360° image services in which format conversions are frequently required.

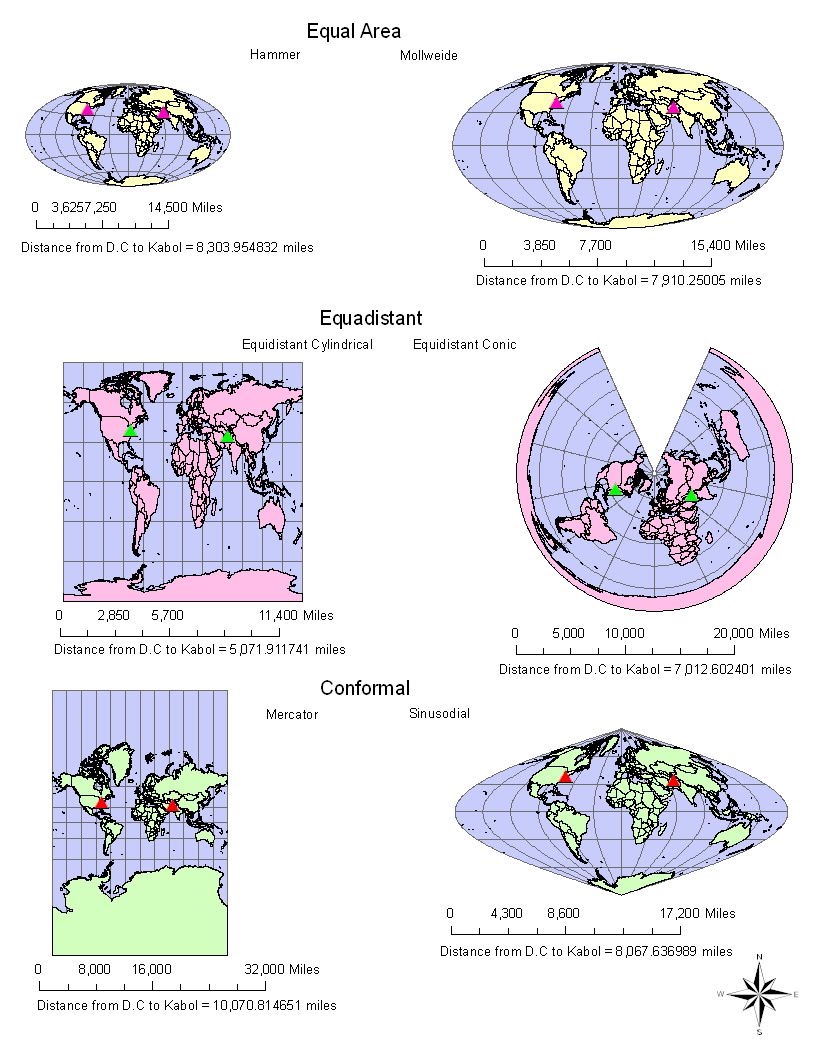

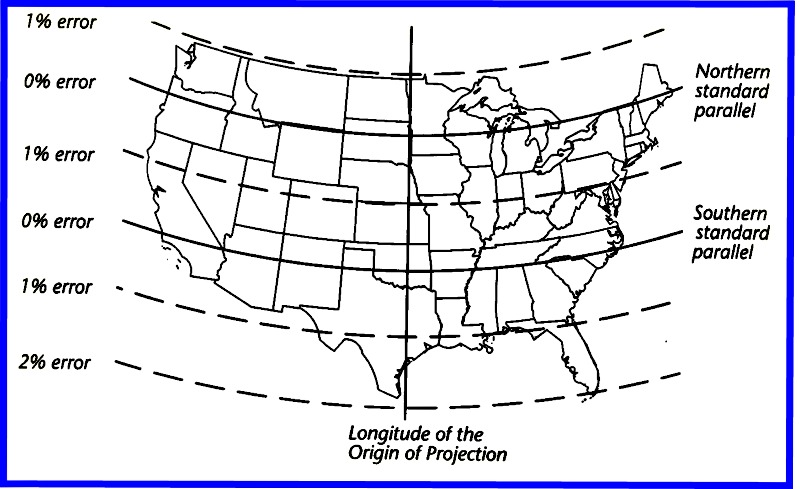

Currently available 360° cameras normally capture several images covering a scene in all directions around a shooting point. The captured images are spherical in nature and are mapped to a two-dimensional plane using various projection methods. Many projection formats have been proposed for 360° videos. However, standards for quality assessment of 360° images are limited. In this paper, various projection formats are compared to explore the problem of distortion caused by the mapping operation, which has been a considerable challenge in recent approaches. The performance of various projection formats, including equi-rectangular, equal-area, cylindrical, cube-map, and their modified versions, is evaluated by which conversion causes the least distortion when the format is changed. The evaluation is conducted using sample images selected according to several attributes that determine perceptual image quality.

Since ball-and-stick models are often a favourite starting point for physicists and chemists interested in 3D graphics, I've collected the formulas needed to do the above with cylinders (without end caps, with flat end caps, or with spherical end caps) on my Wikipedia user page.

If we are outside the sphere, use $-$ above; if we are inside the sphere, use $+$ above. In general, pick the sign that yields the smaller, but positive, $R$.
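The sign rule for the sphere can be illustrated with a small Python sketch. The formula the text calls "above" is not reproduced here, so this assumes the standard ray-sphere intersection for a ray that starts at the origin with a unit-length direction; the function name `ray_sphere_distance` and its parameters are hypothetical.

```python
import math

def ray_sphere_distance(direction, center, radius):
    """Distance R along a ray from the origin to a sphere, or None on a miss.

    Assumes `direction` is a unit-length 3-tuple (an assumption of this
    sketch, not something stated in the text).
    """
    # b = direction . center
    b = sum(d * c for d, c in zip(direction, center))
    # c2 = |center|^2 - radius^2 (negative when the origin is inside the sphere)
    c2 = sum(c * c for c in center) - radius * radius
    disc = b * b - c2
    if disc < 0.0:
        return None  # the ray misses the sphere entirely
    root = math.sqrt(disc)
    # Candidates are R = b - root and R = b + root, tried in increasing order,
    # so the first positive one is the smaller positive R.
    for r in (b - root, b + root):
        if r > 0.0:
            return r
    return None  # the sphere lies entirely behind the ray origin

# Outside the sphere: the "-" root is the near intersection.
print(ray_sphere_distance((0.0, 0.0, 1.0), (0.0, 0.0, 5.0), 1.0))
# Inside the sphere: only the "+" root is positive.
print(ray_sphere_distance((0.0, 0.0, 1.0), (0.0, 0.0, 0.0), 2.0))
```

Trying the two candidates in increasing order covers both cases without an explicit inside/outside test.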

The hard part is understanding how it is done, and that is what I shall try to explain here. It is all based on optics and (linear) algebra.

Let's assume you stand in front of a window, looking out. If you stand in the center of the window, looking out through the center of the window, then we can treat the center of your eye (more precisely, the center of the lens in the pupil of your dominant eye) as the origin in 3D coordinates. Using OP's conventions, the $x$ axis increases up, the $y$ axis right, and the $z$ axis outside the window. Thus, the center of the window is at $(0, 0, d)$, where $d$ is the distance from the eye to the window.

If we know the 3D coordinates, in the above coordinate system, of interesting details outside, 3D projection tells us their coordinates on the surface of the window. These coordinates are what OP needs to draw 3D pictures to a 2D surface.

Here is a rough diagram of the situation: the blue pane is the window, the eye is at the lower left corner, and we are interested in the projected coordinates (projected to the window, that is) of the four corners of some cube at some distance.

In a very real sense, those coordinates are obtained by linear interpolation, except that one end of the line segment is at the eye (which we already decided is the origin, so coordinates $(0, 0, 0)$), the other end is at the 3D coordinates of the detail we wish to project, and the interpolation point is where that sight line (usually called a "ray") intersects the view plane (the window, in our case). Let's say the 3D coordinates of an interesting detail, say a corner of the greenish cube above, are $(x, y, z)$. The sight line reaches the window plane $z = d$ at the fraction $d/z$ of the way from the eye to the detail. Therefore, the 2D coordinates of that detail on the window are $(x\,d/z,\; y\,d/z)$.
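The window projection described here can be sketched in a few lines of Python. This is a minimal sketch, assuming the eye is at the origin and the window lies in the plane $z = d$, so that the sight line to $(x, y, z)$ crosses the window at the fraction $d/z$ of its length; the function name `project_to_window` is hypothetical.

```python
def project_to_window(point, d):
    """Project a 3D point onto the window plane z = d, with the eye at the origin.

    Returns the 2D window coordinates (x', y'), or None when the point is
    not in front of the eye (z <= 0), where the projection is undefined.
    """
    x, y, z = point
    if z <= 0.0:
        return None  # behind the eye, or in the plane of the eye itself
    scale = d / z    # interpolation fraction along the sight line
    return (x * scale, y * scale)

# Four corners of the near face of a cube, window at distance d = 2.
corners = [(2.0, 4.0, 8.0), (4.0, 4.0, 8.0), (2.0, 6.0, 8.0), (4.0, 6.0, 8.0)]
for corner in corners:
    print(corner, "->", project_to_window(corner, 2.0))
```

Points twice as far away are scaled by half as much, which is what makes distant objects look smaller on the window.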
